PUC debate over design-day methods: data window, probability and model validation

January 21, 2026 | Public Utilities Commission, Governor's Boards and Commissions, Organizations, Executive, Colorado

This article was created by AI summarizing key points discussed. AI makes mistakes, so for full details and context, please refer to the video of the full meeting.

A technical portion of the Jan. 20 Public Utilities Commission hearing focused on how Public Service calculates design-day temperatures and validates its hydraulic models.

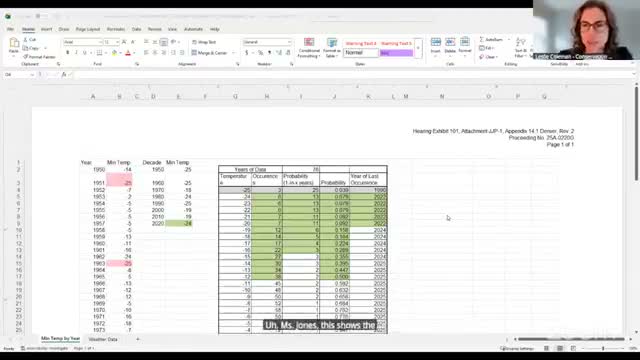

Company witness Grace Jones explained that the utility uses a long historical temperature dataset (reaching back to 1950 in the record) and recalculates design-day probabilities annually. Counsel for Conservation Advocates ran a sensitivity that removed pre-2000 data and applied a 1-in-25 probability (a stipulation advocated by some parties). In the sample spreadsheet, the Denver design-day value shifted from -25°F to a colder or warmer value depending on the tie-breaking rule applied. Jones said the company's established tie-breaking practice selects the value numerically nearest to the requested probability, and that she would select the coldest temperature when the computed probability exceeds the target.
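To illustrate why the data window and the tie-breaking rule can move a design-day value, here is a minimal Python sketch. It is not the company's spreadsheet or method. It assumes one annual minimum temperature per year and an empirical probability equal to the share of years at or below a candidate temperature. The function names, the two rule labels and the sample temperatures are all hypothetical, invented for illustration.

```python
from fractions import Fraction

def empirical_probabilities(annual_minimums, start_year):
    """Share of years (from start_year on) whose minimum is at or below each observed value."""
    temps = [t for year, t in annual_minimums.items() if year >= start_year]
    if not temps:
        raise ValueError("no years at or after start_year")
    n = len(temps)
    # Fractions keep ties exact, so the tie-breaking rule (not float rounding) decides.
    return {t: Fraction(sum(1 for x in temps if x <= t), n) for t in sorted(set(temps))}

def pick_design_day(probs, target, rule="nearest"):
    """Choose a design-day temperature under one of two illustrative (assumed) rules."""
    if rule == "nearest":
        # Probability closest to the target; an exact tie goes to the colder value.
        return min(probs, key=lambda t: (abs(probs[t] - target), t))
    if rule == "not_above_target":
        # Warmest value whose probability does not exceed the target;
        # if every value exceeds it, fall back to the coldest observation.
        eligible = [t for t, p in probs.items() if p <= target]
        return max(eligible) if eligible else min(probs)
    raise ValueError(f"unknown rule: {rule}")

# Made-up annual minimums in degrees Fahrenheit, NOT data from the hearing record.
SAMPLE_ANNUAL_MINIMUMS_F = {
    1990: -25, 1995: -20, 2000: -10, 2001: -12, 2002: -15, 2003: -8, 2004: -18,
}

for start in (1990, 2000):
    probs = empirical_probabilities(SAMPLE_ANNUAL_MINIMUMS_F, start)
    for rule in ("nearest", "not_above_target"):
        print(start, rule, pick_design_day(probs, Fraction(1, 25), rule))
```

With so few sample years, no observed value reaches a 1-in-25 probability, so both rules fall to the coldest year in the window. Dropping the older years changes which year that is, which is the kind of shift the sensitivity was meant to expose.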

Commissioners and intervenors asked how the company validates its models against observed cold events. Jones confirmed that the company calibrates its models annually using flow and pressure observations at regulator stations and other system points. She agreed to have the company propose a deliverable of correlation data (modeled vs. observed) for the Denver extreme-day event and other weather zones, showing how closely the model predicted realized flows. Commissioners requested aggregated node-level comparisons and transparency about any missing data.
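A modeled-versus-observed comparison of the kind discussed could be summarized roughly as follows. This Python sketch is an assumption about what such a deliverable might compute, not the company's procedure: it pairs modeled and observed flows by node, reports a Pearson correlation and mean absolute error, and lists nodes with no observation rather than silently dropping them. The function name, node labels and output fields are hypothetical.

```python
import math

def compare_modeled_to_observed(modeled, observed):
    """Summarize model fit across nodes and list nodes lacking an observation."""
    # A node counts as missing if it is absent from `observed` or recorded as None.
    missing = sorted(n for n in modeled if observed.get(n) is None)
    paired = [(modeled[n], observed[n]) for n in sorted(modeled)
              if observed.get(n) is not None]
    if len(paired) < 2:
        raise ValueError("need at least two nodes with observations")

    xs = [m for m, _ in paired]
    ys = [o for _, o in paired]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in paired)
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one series is constant")

    return {
        "pearson_r": cov / math.sqrt(var_x * var_y),
        "mean_abs_error": sum(abs(x - y) for x, y in paired) / len(paired),
        "nodes_compared": len(paired),
        "missing_nodes": missing,
    }

# Hypothetical node flows (units unspecified), NOT values from the hearing record.
modeled_flows = {"node_A": 100.0, "node_B": 200.0, "node_C": 300.0, "node_D": 400.0}
observed_flows = {"node_A": 95.0, "node_B": 210.0, "node_C": 290.0, "node_D": None}
print(compare_modeled_to_observed(modeled_flows, observed_flows))
```

Reporting the missing nodes alongside the statistics addresses the transparency request directly, since a high correlation computed over only the well-instrumented nodes could otherwise overstate model fit.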

The exchange exposed differences among stakeholders over whether shorter, more recent weather windows better capture warming trends, and whether such choices materially change capacity planning. Jones said the company is willing to run additional sensitivities but defended a long dataset as best practice for probabilistic analysis of rare cold events.
